While there are no doubt many clear-cut cases where such a policy is advisable and commendable, I wonder whether many people trust YouTube, or whatever team or algorithm is deciding what is "extreme" and what isn't, to do the job of removing the videos. I've seen plenty of pro-ISIS/pro-Islamist terrorism Twitter accounts skate by with no repercussions (presumably because they primarily communicate in Arabic or other languages that the monitors can't read or understand), while the accounts of ex-Muslims who are now critical of the very movements they themselves used to be a part of get deleted or suspended for "inciting racial hatred", "inciting violence", etc. From what I've heard in the ex-Muslim community on YouTube, it is much the same there, with many videos being removed for pointing out the supremacist ideologies inherent in this or that hadith or section of the Qur'an (stuff about the Jews and Christians being "the sons of apes and pigs", while the Muslims are the "best of people ever created" or whatever). Surely there is some latitude regarding what is 'extreme' when it comes to religious texts written in the pre-modern era (so long as they aren't Christian in any way...), but if merely pointing out the attitudes that some adopt as a result of their fidelity to their religion and its texts (or rather their understanding of them, I suppose) suddenly becomes in itself 'extremism', then I have very little hope that this kind of wide-reaching mandate will be anything but a breeding ground for abuse and enforcement of group-think.

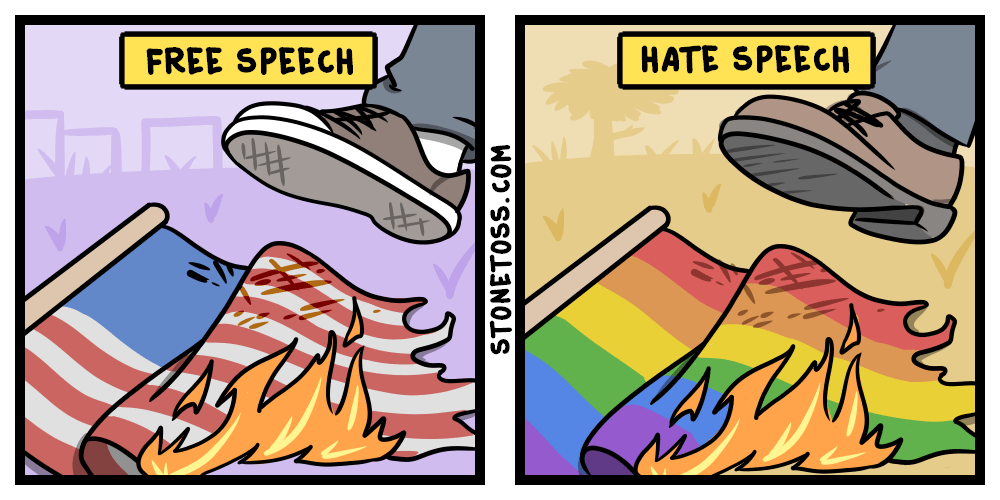

Since YouTube, et al. are so much of the 'digital public square' these days, I personally think they ought to be taken out of the hands of private companies and run like utilities. You can't cut off a guy's phone service because he's a Nazi, but you can prosecute him for racist hate crimes planned over said phone line. That sort of distinction would be great, rather than YouTube's current system, wherein a view that is merely unpopular with a particularly loud segment of society gets flagged as 'hate' when it is really just criticism that they do not like to hear, but that others should still be allowed to voice, assuming that they don't say it in a way that itself invokes racial/ethnic/religious extremism. (And, yes, all of this should apply to Christians, too. Why wouldn't it? We're no strangers to having our religion criticized from every corner and under every possible guise, and anyone else should expect the same if they're going to use these platforms. I just fear that there will be some very bad unintended consequences of this very well-intended plan, which may enable certain kinds of extremism to grow unchallenged.)